“As a result, some of RAZR’s most iconic design features-ultrathin backlit keypad, distinguished chin, metallic finish-came to life by truly innovating around a sleek design.” The brand then started offering the phone in multiple colors, making it a fashion accessory, and eventually going on to partner with Dolce & Gabanna on a limited edition model. This focus on thinness caused us to cut the device’s thickness in half and entirely rethink how to design and architect a phone,” says Paul Pierce, executive director at Motorola Mobility, who was part of the original RAZR team. “In 2003, Motorola set out with a goal of making a device that could easily fit into your pocket. Perhaps the first cell phone to go “viral,” if you will, was the Motorola RAZR, which hit the market in 2004. But we’re all for a nostalgic blast from the past-check out eight of the most iconic cell phone designs from 2000 to 2009.

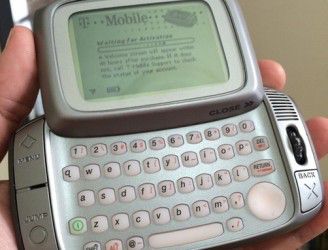

“This goes hand in hand with reducing technology-based stress in our lives with a turn to bicycles, slow cooking, yoga, healthy options in food.” Will we all go back to texting via T9? We’re not so convinced. Some people who do not use most of these features want it to be just a phone,” Constantin Boym, Pratt Institute’s chair of industrial design, tells AD. “The phone today has too many functions, too many apps, and now too many photo options. And despite smartphones being as popular as they are today, there’s even a nostalgic trend to return to the flip or slider phones of the early 2000s. Their designs were avant-garde, and became quickly intertwined with pop culture of the time- Paris Hilton is best remembered from that era clutching one of her dogs and a cell phone. If you are interested to the full details of their solution, do not miss Goral's original article.In the first decade of the new millennium, cell phones hit the mainstream for the first time, and numerous companies battled to create the next must-have accessory. The solution implemented in Sidekick fully exploits the asynchronicity inherent in this workflow and integrates the response demultiplexing step with the Markdown buffering parser.

Once the additional requests complete, Sidekick replaces the placeholders with the received information. Sidekick renders the initial response received from the LLM, including any placeholders. To prevent the user having to wait until all external services have responded, Sidekick uses the concept of "cards", which are placeholders. When those additional pieces of data are received, the LLM forges the full response, which is finally displayed to the user. In other words, based on user input, the initial response provided by the LLM also includes which other services to consult to get the information that is missing. We therefore tell LLMs to tell us when they need information beyond their grasp through the use of tools. LLMs have a good grasp of general human language and culture, but they’re not a great source of up-to-date, accurate information. Latency is, on the other hand, mostly the result of the need to make multiple LLM roundtrips to consume external data sources to extend the LLM initial response. While this solution is, in principle, relatively easy to implement manually, supporting the full Markdown specification requires using an off-the-shelf parser, says Goral. The stream processor either passes through the characters as they come in, or it updates the buffer as it encounters Markdown-like character sequences. To solve this problem, Spotify uses a buffering parser that does not emit any character after a Markdown special character and waits until either the full Markdown expression is complete, or an unexpected character is received.ĭoing this while streaming requires the use of a stateful stream processor that can consume characters one-by-one.

This implies that Markdown expressions cannot be correctly rendered until they are complete, which means that for a short period of time Markdown rendering is not correct. The same problem applies to links and all other Mardown operators. Streaming a Markdown response returned by the LLM leads to rendering jank due to the fact that special Markdown characters, like *, remain ambiguous until the full expression is received, e.g., until the closing * is received. While using a Large Language Model chatbot opens the door to innovative solutions, Spotify engineer Ates Goral argues that crafting the user experience so it is as natural as possible requires some specific efforts in order to prevent rendering jank and to reduce latency.
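
The buffering idea for streamed Markdown can be illustrated with a minimal Python sketch. It is not Sidekick's implementation: the class name `EmphasisBuffer` and the helper `stream_chunks` are hypothetical, and the sketch only handles single-asterisk emphasis on one line, whereas a real system would need a full Markdown parser, as Goral notes.

```python
class EmphasisBuffer:
    """Stateful processor that holds back text after '*' until it is unambiguous.

    Assumption for this sketch: only single-asterisk emphasis is recognised,
    and a newline counts as the "unexpected character" that ends buffering.
    """

    def __init__(self):
        self._buffer = ""  # pending text starting with '*', or "" when idle

    def feed(self, ch):
        """Consume one character and return the text that is safe to emit."""
        if not self._buffer:
            if ch == "*":
                self._buffer = ch  # start holding back output
                return ""
            return ch  # ordinary character: pass it through
        if ch == "*":
            # Closing marker: release the complete expression in one piece.
            out, self._buffer = self._buffer + ch, ""
            return out
        if ch == "\n":
            # Unexpected character: this was not emphasis, release literally.
            out, self._buffer = self._buffer + ch, ""
            return out
        self._buffer += ch  # still ambiguous, keep buffering
        return ""

    def flush(self):
        """Release whatever is still buffered when the stream ends."""
        out, self._buffer = self._buffer, ""
        return out


def stream_chunks(chars):
    """Yield renderable chunks for an iterable of incoming characters."""
    processor = EmphasisBuffer()
    for ch in chars:
        out = processor.feed(ch)
        if out:
            yield out
    tail = processor.flush()
    if tail:
        yield tail


if __name__ == "__main__":
    for chunk in stream_chunks("Stock is *very low* today"):
        print(repr(chunk))
```

With this approach the renderer never sees a lone `*`: it receives either plain characters or a complete `*...*` expression, so the display does not flip between literal and formatted text.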

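The "cards" workflow can be sketched in a similar spirit. The example below is an illustration under stated assumptions, not Sidekick's code: the `{{card:name}}` placeholder syntax, `fetch_card`, and `render_with_cards` are all hypothetical, and the external service is simulated with a short sleep. It shows the initial response being displayed at once and each placeholder being replaced as its request completes.

```python
import asyncio
import re
from typing import List

# Hypothetical placeholder syntax, chosen only for this sketch.
CARD_PATTERN = re.compile(r"\{\{card:(\w+)\}\}")


async def fetch_card(name: str) -> str:
    """Stand-in for a call to an external service; returns canned data."""
    await asyncio.sleep(0.01)
    return f"[{name} data]"


async def render_with_cards(initial: str, fetch=fetch_card) -> List[str]:
    """Return each successive rendering of the response.

    The first frame is the initial LLM response with placeholders intact;
    every later frame has one more placeholder replaced by fetched data.
    """
    frames = [initial]  # shown to the user immediately
    # Deduplicate card names while preserving their order of appearance.
    names = list(dict.fromkeys(CARD_PATTERN.findall(initial)))

    async def resolve(name: str):
        return name, await fetch(name)

    current = initial
    # Handle the requests in completion order, not in request order.
    for finished in asyncio.as_completed([resolve(n) for n in names]):
        name, data = await finished
        current = current.replace("{{card:%s}}" % name, data)
        frames.append(current)
    return frames


if __name__ == "__main__":
    text = "Sales: {{card:sales}} Stock: {{card:stock}}"
    for frame in asyncio.run(render_with_cards(text)):
        print(frame)
```

Because the requests run concurrently and are applied as they finish, the user waits only for the slowest service before seeing the complete response, while everything else is visible earlier.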